We recently built four AI agents to handle conversations on our website. Not as a product demo or a proof of concept, but because we genuinely needed help answering the same questions over and over while the team was heads-down building.

The interesting part wasn't the technology. It was designing the personalities.

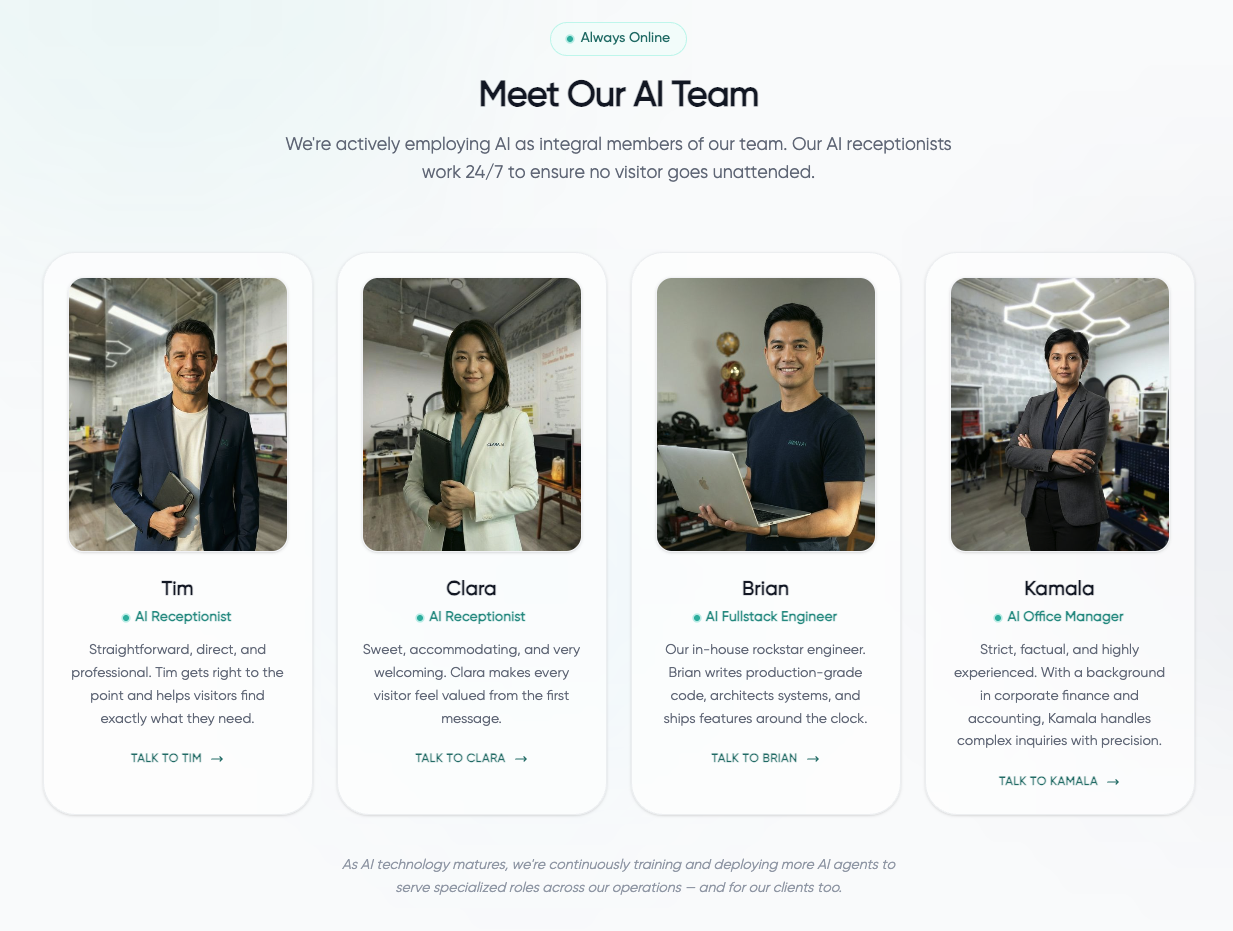

Four Agents, Four Very Different Personalities

When we first set this up, we started with a single agent using a generic "helpful and professional" system prompt. It worked, technically. But every conversation felt the same — polite, bland, and forgettable. Like talking to a customer service script.

So we tried something different: we created four distinct personas, each tuned to a different conversational style.

- Tim is direct and no-nonsense. If you ask him a question, he gives you the answer without padding. Some visitors love this. Others find him blunt. That's the point — he filters for people who want efficiency over warmth.

- Clara is warm and welcoming. She's configured to make first-time visitors feel comfortable, especially people who aren't sure what they're looking for yet. She asks more questions than the others.

- Brian is the technical one. When someone starts asking about API architectures or deployment strategies, Brian lights up. He's also the one we deployed onto a live server during a security incident over Tết to help parse logs. Turns out, giving an AI agent a "security engineer" personality actually changes how it approaches log analysis.

- Kamala is the consultant. Factual, experienced, and structured in her responses. She's built for people who already know what they want and just need someone to map it to specifics.

The biggest surprise: visitors naturally gravitate toward different personas depending on their own communication style. We didn't expect that level of self-selection, but it makes sense in hindsight.

What We Learned About Persona Engineering

The real lesson from building these four agents is that personality affects output quality more than knowledge does.

We tested this extensively. The same knowledge base (identical company information, identical service descriptions) produces responses of noticeably different quality depending on the persona wrapped around it. Brian gives technically precise answers because his persona is defined as a technical specialist. Clara gives warmer, more exploratory answers because she's defined as a welcoming guide.

This isn't just cosmetic. The persona constrains the language model's generation in useful ways. In our experience, a "senior technical consultant" persona is less likely to hallucinate vague marketing claims than a "helpful assistant" persona, most likely because the model associates that identity with precision and specificity.

We wrote about the full architecture — Soul, Objectives, Knowledge, and Guardrails — in a separate deep-dive post. The short version: knowledge is the layer everyone focuses on, but the personality layer has the highest return on investment.
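To make the layering concrete, here is a minimal sketch of how the four layers might be assembled into a system prompt. The `Persona` class, the `build_system_prompt` function, the section headings, and all placeholder text are illustrative assumptions, not the production setup. The point it shows is that Knowledge and Guardrails stay identical while only the persona-specific layers change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """Persona-specific layers (hypothetical structure for illustration)."""
    name: str
    soul: str        # personality and voice
    objectives: str  # what the agent is trying to achieve


# Shared layers: identical for every persona. Placeholder text only.
KNOWLEDGE = "Company information and service descriptions go here."
GUARDRAILS = (
    "Answer only from the knowledge above. "
    "If the answer is not there, say so and offer to connect a human."
)


def build_system_prompt(persona: Persona) -> str:
    """Join the four layers into a single system prompt string."""
    sections = [
        ("Soul", persona.soul),
        ("Objectives", persona.objectives),
        ("Knowledge", KNOWLEDGE),
        ("Guardrails", GUARDRAILS),
    ]
    return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


tim = Persona(
    name="Tim",
    soul="Direct and no-nonsense. Answer without padding.",
    objectives="Give visitors the answer as efficiently as possible.",
)
clara = Persona(
    name="Clara",
    soul="Warm and welcoming. Ask questions to understand the visitor.",
    objectives="Help first-time visitors work out what they are looking for.",
)

tim_prompt = build_system_prompt(tim)
clara_prompt = build_system_prompt(clara)
```

Both prompts carry the same Knowledge and Guardrails text, so any difference in the answers comes from the Soul and Objectives layers.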

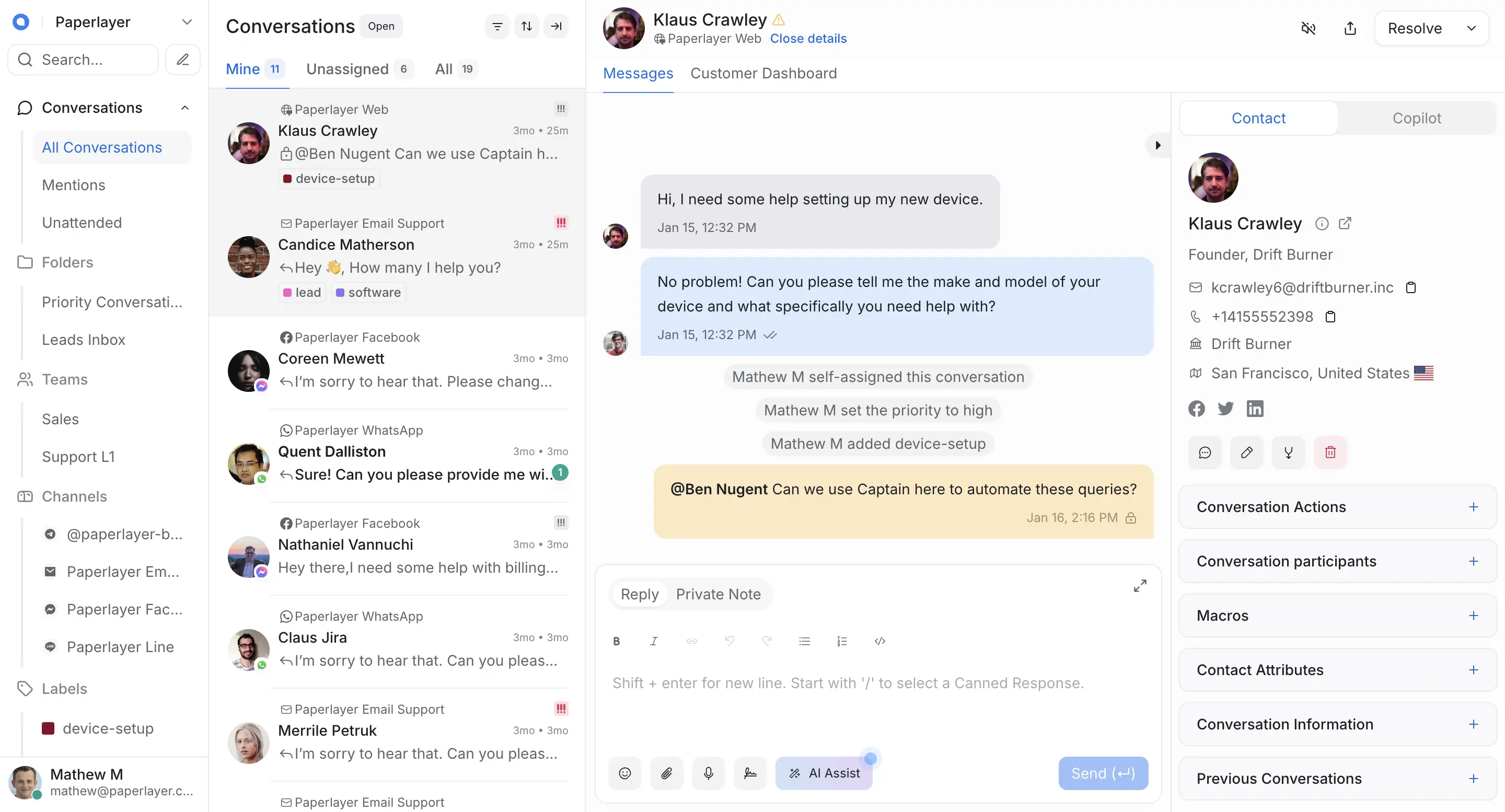

How We Track Conversations

Every interaction is logged into a dashboard where our human team can review conversations, see which questions come up most often, and take over if the AI hits its limits.

This matters because AI without oversight is AI that drifts. We review conversations weekly, looking for two things: questions the agents handle well (to reinforce those patterns) and questions where they struggled or deflected (to add better knowledge entries). The agents get incrementally better because we're paying attention to the gaps.
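The weekly review can be pictured as a simple tally over the logged conversations. The sketch below is an assumption about how such a report could be computed: the `Conversation` record, the `outcome` labels, and the sample questions are all hypothetical, and the real dashboard may work quite differently.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Conversation:
    """One logged exchange (hypothetical record shape)."""
    persona: str
    question: str
    outcome: str  # assumed labels: "answered", "deflected", "handoff"


def weekly_review(conversations, top_n=5):
    """Count the most frequent questions and the ones that were not answered."""
    def normalise(q):
        return q.strip().lower()

    all_questions = Counter(normalise(c.question) for c in conversations)
    gaps = Counter(
        normalise(c.question) for c in conversations if c.outcome != "answered"
    )
    return {
        "top_questions": all_questions.most_common(top_n),
        "gaps": gaps.most_common(top_n),  # candidates for new knowledge entries
    }


# Made-up sample data, for illustration only.
log = [
    Conversation("Tim", "What does it cost?", "answered"),
    Conversation("Clara", "what does it cost?", "answered"),
    Conversation("Brian", "Do you support SSO?", "deflected"),
    Conversation("Kamala", "Do you support SSO?", "handoff"),
    Conversation("Clara", "Where are you based?", "answered"),
]

report = weekly_review(log)
```

In this sample, the question that shows up under `gaps` is the one worth writing a knowledge entry for.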

Keeping Things Honest

One rule we set early: the agents are restricted to approved knowledge sources only, meaning company information, website content, help centre articles, and past conversation logs. If they don't have an answer, they say so and offer to connect the visitor with a human.

We'd rather an AI say "I don't know, but let me get someone who does" than confidently make something up. That's the line between useful and dangerous.
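As a rough sketch of that rule, the snippet below answers only when a question matches an approved entry and otherwise returns the handoff message. The `APPROVED_ENTRIES` dictionary, the `answer` function, and the naive keyword match are assumptions for illustration. A real deployment would more likely use retrieval over the approved sources, but the fallback logic has the same shape.

```python
HANDOFF_MESSAGE = "I don't know, but let me get someone who does."

# Hypothetical approved knowledge entries, keyed by topic keyword.
APPROVED_ENTRIES = {
    "pricing": "Placeholder answer about pricing.",
    "opening hours": "Placeholder answer about opening hours.",
}


def answer(question: str) -> tuple[str, bool]:
    """Return (reply, needs_human).

    Replies come only from APPROVED_ENTRIES. If nothing matches,
    the agent admits it and flags the conversation for a human.
    """
    text = question.lower()
    for topic, reply in APPROVED_ENTRIES.items():
        if topic in text:
            return reply, False
    return HANDOFF_MESSAGE, True


known_reply, known_needs_human = answer("Can you tell me about your pricing?")
unknown_reply, unknown_needs_human = answer("Do you ship to Antarctica?")
```

The second value is what lets the dashboard route the conversation to a person instead of leaving the visitor with a dead end.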

Try Them Out

The agents are live on our AI Receptionist page. Pick a persona and start chatting — ask about our work, test their boundaries, or just see how differently Tim and Clara respond to the same question. It's a good way to see what persona engineering actually feels like from the visitor's side.