Everyone is talking about prompt engineering. Which model is best. Which IDE has the smartest autocomplete. Which $200/month plan gives you the most tokens.

We think most of this misses the point.

At Alpha Bits, the most important idea behind everything we build isn't a tool, a framework, or a model. It's a way of thinking we call Data-First Principle Thinking (DFPT): the belief that raw data, properly collected and structured, will lead you to conclusions that speculation alone won't.

Scientists call it the empirical method. Analysts call it evidence-based decision-making. We just gave it a name that fits how we actually work — across software, hardware, energy research, and AI — and built a company around it.

The Problem: Tool Fatigue Is a Symptom, Not a Disease

If you're a developer or business leader in 2026, you're drowning in AI tools. New models drop weekly. IDEs get "AI-powered" overnight. Every vendor promises that their platform is the one that will transform your workflow.

But most teams using these tools still get mediocre results.

The reason is simple: they're skipping the foundation. They feed AI tools unstructured context, vague instructions, and scattered data — then blame the model when the output is generic.

The useful output from AI doesn't come from the prompt. It comes from the context you feed it.

We think of this as the shift from prompt engineering to context engineering. Tools will change every quarter. Your internal knowledge, your data structures, your documented decisions — those accumulate and get more valuable over time.

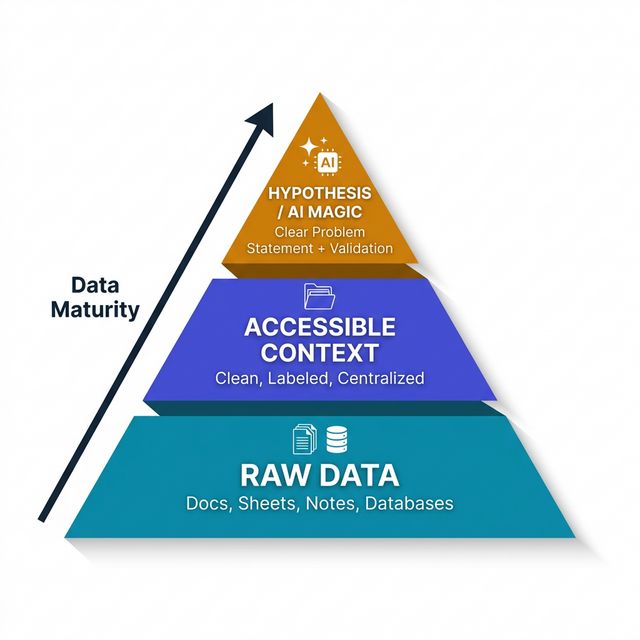

The Data Pyramid

This is the framework we use for every project, every experiment, and every business decision at Alpha Bits.

The pyramid is simple. Moving up it is not. Here's how it plays out in practice — using an IoT monitoring project as an example:

Layer 1 — Raw Data: The team needs to monitor energy usage across multiple sites. Before writing any code, they list the exact data fields required: timestamp, site_id, sensor_type, temperature_celsius, power_draw_watts, battery_voltage, device_firmware_version. Seven fields. Not "some sensor data" — the specific fields needed to answer the question "Is our system losing efficiency overnight?"

Layer 2 — Accessible Context: The raw sensor readings are flowing into a database, but they're messy — different sensors use different units, some devices report every 5 seconds, others every 30. The team builds a Data Dictionary that standardises everything: all temperatures in Celsius, all power in watts, all timestamps in UTC. They set up a central InfluxDB with Grafana dashboards. Now anyone — human or AI — can query the data without first spending two hours figuring out what temp_3 means.

Layer 3 — Hypothesis / AI Magic: With clean, labelled data in one place, the team can finally ask the real question: "Which sites are losing more than 5% thermal efficiency during overnight discharge cycles, and does the pattern correlate with ambient temperature or firmware version?" Feed that hypothesis plus the structured data to an AI tool, and you get a specific, actionable answer — not a generic essay about thermal efficiency.

Getting through these layers takes weeks. But every future project that touches the same data inherits the structure you built. The work pays forward.
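Layers 1 and 2 can be sketched in a few lines of Python. This is a minimal illustration, not our production code: the seven field names come from the list above, but the raw payload keys (`ts`, `site`, `type`, `temp`, `temp_unit`, `power`, `power_unit`, `battery_v`, `fw`) and the rule that timestamps without an offset are already UTC are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SensorReading:
    """One normalised reading with the seven Layer 1 fields."""
    timestamp: datetime            # timezone-aware, always UTC
    site_id: str
    sensor_type: str
    temperature_celsius: float
    power_draw_watts: float
    battery_voltage: float
    device_firmware_version: str


def normalise(raw: dict) -> SensorReading:
    """Convert one raw device payload (hypothetical keys) to standard units."""
    temp = float(raw["temp"])
    if raw.get("temp_unit", "C") == "F":
        temp = (temp - 32.0) * 5.0 / 9.0       # Fahrenheit -> Celsius

    power = float(raw["power"])
    if raw.get("power_unit", "W") == "kW":
        power *= 1000.0                        # kilowatts -> watts

    ts = datetime.fromisoformat(raw["ts"])
    if ts.tzinfo is None:
        # Assumption: devices that send no offset already report UTC.
        ts = ts.replace(tzinfo=timezone.utc)
    ts = ts.astimezone(timezone.utc)

    return SensorReading(
        timestamp=ts,
        site_id=raw["site"],
        sensor_type=raw["type"],
        temperature_celsius=temp,
        power_draw_watts=power,
        battery_voltage=float(raw["battery_v"]),
        device_firmware_version=raw["fw"],
    )
```

Once every reading passes through a function like this, a query never has to guess which unit or time zone a value is in.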

Level 1: Raw Data (The Base)

Before you touch an AI tool, ask: What raw data fields are required to make a conclusion?

This sounds obvious. It's not. Most teams skip this step entirely. They jump into analysis with whatever data happens to be convenient, rather than identifying what data is actually necessary.

What this looks like in practice:

- Before building an IoT monitoring system, we list every sensor reading we need: temperature, humidity, power draw, timestamps, device IDs, location tags. Not "some sensor data" — the specific fields.

- Before optimising a client's operations, we audit their existing data sources. Five different POS systems across 200 outlets? We start by mapping every field in every system before writing a single line of integration code.

- Before debugging a production incident, we collect logs, error traces, network captures, and timeline data before forming hypotheses about what went wrong.

The point is straightforward: if you don't have the raw data, you can't draw conclusions. No AI model compensates for missing data. Document what you need first. Collect it second. Analyse it third.
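"Document what you need first" can be made checkable. The sketch below assumes each record arrives as a plain dictionary and that the required field names are the ones you wrote down beforehand; the names in `REQUIRED_FIELDS` are illustrative, loosely based on the IoT bullet above.

```python
# Illustrative field names; replace with the list you documented for your question.
REQUIRED_FIELDS = (
    "timestamp", "device_id", "temperature",
    "humidity", "power_draw", "location_tag",
)


def missing_fields(rows, required=REQUIRED_FIELDS):
    """Count, per required field, the rows where it is absent or None."""
    gaps = {}
    for row in rows:
        for field in required:
            if row.get(field) is None:
                gaps[field] = gaps.get(field, 0) + 1
    return gaps
```

An empty result means the batch is complete enough to analyse. Anything else is a collection problem to fix before any model sees the data.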

Level 2: Accessible Context (The Middle)

Raw data is useless if it's scattered across fifteen spreadsheets, three messaging apps, and someone's memory.

The second level is making your data clean, properly labelled, and accessible from one central location.

At Alpha Bits, we enforce this with a few habits:

The Data Dictionary: Every project gets a living document that defines what each data field means — its source, its format, its update frequency, and who owns it. When an AI tool needs context about our codebase, our infrastructure, or our client's business logic, this document is the first thing it reads.

Centralised documentation: Every SOP, every architecture decision, every meeting outcome gets written down in a shared, searchable location. Not in Slack threads. Not in email chains. In documents that AI tools can actually access and reference.

Clean labelling: We name things precisely. A database column called temp could mean temperature, temporary, or template. We don't allow that ambiguity. sensor_temperature_celsius, is_temporary, email_template_id — specificity is a gift to your future self and to every AI tool that will ever read your schema.

This level is tedious and extremely valuable. Most teams skip it because it feels like overhead. We've learned the hard way that every hour spent on data organisation saves ten hours of confused AI output and debugging later.
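A Data Dictionary doesn't need special tooling. As a rough sketch, each entry can be a small record holding the attributes described above (meaning, source, format, update frequency, owner), with a check that flags vague names. The `FieldDefinition` class and the `AMBIGUOUS_NAMES` set are hypothetical, chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldDefinition:
    """One Data Dictionary entry."""
    name: str
    meaning: str
    source: str
    format: str
    update_frequency: str
    owner: str


# Assumed examples of names too vague to allow in a schema.
AMBIGUOUS_NAMES = {"temp", "data", "value", "info", "flag"}


def ambiguous_fields(dictionary):
    """Return the names of entries whose column name is on the vague list."""
    return [f.name for f in dictionary if f.name.lower() in AMBIGUOUS_NAMES]


def render_context(dictionary):
    """Render the dictionary as plain text that a person or an AI tool can read."""
    return "\n".join(
        f"{f.name}: {f.meaning} (source: {f.source}; format: {f.format}; "
        f"updated: {f.update_frequency}; owner: {f.owner})"
        for f in dictionary
    )
```

Running `ambiguous_fields` in a review step is one way to catch a column called `temp` before it reaches the schema.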

Level 3: Hypothesis and Validation (The Peak)

Only after the base and middle layers are solid do you reach the part most people start with: asking AI to do something useful.

At this level, you define clear problem statements or hypotheses that require validation against your actual data:

- "Based on last quarter's sensor readings, is our thermal storage system losing more than 5% efficiency during overnight cycles?"

- "Which of our client's 200 outlets has the highest variance between POS-reported revenue and bank settlement amounts?"

- "Given the last 90 days of Node-RED flow logs, which automation sequences have the highest failure rate?"

These aren't vague prompts. They're testable hypotheses grounded in structured data. The AI's job is to process the data and surface patterns — not to guess what you meant.

This is where the work pays off. Not because the AI is doing anything special, but because the context you've provided makes it hard for the AI to give you a generic answer. It responds with specifics because specifics are all you've given it.
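A hypothesis like the failure-rate question above becomes a short computation once the logs are structured. The sketch assumes each log entry has already been parsed into a dictionary with a `flow` name and a `status` of `"ok"` or `"error"`. That shape is our assumption for the example, not Node-RED's native log format.

```python
from collections import defaultdict


def failure_rates(log_entries):
    """Return (flow, failure_rate, runs) tuples, highest failure rate first."""
    runs = defaultdict(int)
    failures = defaultdict(int)
    for entry in log_entries:
        runs[entry["flow"]] += 1
        if entry["status"] == "error":
            failures[entry["flow"]] += 1
    rates = [(flow, failures[flow] / runs[flow], runs[flow]) for flow in runs]
    return sorted(rates, key=lambda item: item[1], reverse=True)
```

The run count is returned alongside the rate so that a flow that failed once in two runs isn't read the same way as one that failed five hundred times in a thousand.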

Real Examples: How DFPT Works in Practice

The Accidental Invention

In late 2022, one of our team started a thermal energy storage experiment — a sand battery prototype on an apartment balcony in Saigon. On paper, this person had no business doing energy research. Their background was software — SQL queries, ERP systems, data pipelines.

But they approached it the same way they'd approach a database problem: data first.

Every experiment was instrumented with IoT sensors. Every temperature reading, every power input, every heating cycle was logged with timestamps to a local database. Not because they knew what they were looking for, but because they knew that having the raw data would eventually reveal something.

It did. Within months, the patterns in the data pointed to a thermal isolation technique that nobody in the existing literature had documented. That technique became a US patent. The whole thing was DFPT in action — not genius, not luck, just methodical data collection that made the insight visible.

You don't need to be a domain expert to find something new. You need to collect the right data and pay attention to what it shows you.

The Security Incident

A production system was hit by malware — an "I Love You" variant — at the beginning of a week-long holiday. The team wasn't available. The founder was alone.

The traditional response would be panic, guesswork, or waiting until Monday. Instead, the approach was DFPT: collect the raw data first.

Within hours — with AI tools processing hundreds of thousands of log lines — the attack vector was identified, the compromised entry point was pinpointed with exact timestamps, and a patch was deployed. The AI didn't "know" what happened. But fed properly structured server logs, network captures, and access records, it found the needle in the haystack fast.

The takeaway isn't "use AI for security." It's that structured, centralised logs make any analysis tool — AI or human — effective when it matters.

The F&B Data Unification

A multi-brand food and beverage chain operating 200+ outlets across a Southeast Asian country came to us with a familiar problem: they had data everywhere and insight nowhere.

Five different POS systems. Manual daily reports from store managers. Revenue reconciliation happening in spreadsheets that hadn't been audited in months. The CEO's dashboard showed one number; the CFO's showed another; the store managers' daily reports showed a third.

Most consultants would have started with a tool recommendation — "You need Tableau" or "Let's implement Power BI." We started with the Data Dictionary.

We spent three weeks doing nothing but mapping every data field across every system. What each POS called a "transaction." How each outlet reported "daily revenue." Which fields were auto-generated, which were manually entered, which were calculated. The taxonomy alone filled a 40-page document.

Once the data was clean and accessible in one place, the insights were obvious. No AI required for the first round — the discrepancies were visible to the naked eye. AI came in for the second round: pattern detection across 200 outlets, anomaly flagging, and predictive inventory recommendations.

Before you automate analysis, understand what you're analysing. The Data Dictionary is the most boring and most useful document we produce.
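The field-mapping step can be expressed as data. In this sketch, every system name and field name is invented for illustration (they are not the client's real systems): each POS gets a mapping from its own field names to one canonical name, and any field nobody has documented raises an error instead of passing through silently.

```python
# Hypothetical systems and field names, for illustration only.
FIELD_MAP = {
    "pos_alpha": {
        "txn_id": "transaction_id",
        "total": "gross_amount",
        "store": "outlet_id",
    },
    "pos_beta": {
        "receipt_no": "transaction_id",
        "amount_paid": "gross_amount",
        "branch_code": "outlet_id",
    },
}


def to_canonical(system, record):
    """Rename one record's fields to the canonical names for its POS system."""
    mapping = FIELD_MAP[system]
    unmapped = sorted(set(record) - set(mapping))
    if unmapped:
        # An undocumented field is a gap in the Data Dictionary, so stop here.
        raise KeyError(f"{system}: unmapped fields {unmapped}")
    return {mapping[key]: value for key, value in record.items()}
```

Failing loudly on unmapped fields is a deliberate choice here: it forces the dictionary to be updated before new data flows into the shared store.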

The AI Team Simulation

Recently, we built an interactive thermal simulation — a complex physics visualisation that lets users explore how sand batteries charge and discharge. The project was completed in a few hours by an entirely AI-powered team:

- NotebookLM acted as the Lead Researcher, consolidating years of past experimental data and research notes into a structured brief

- Google Stitch served as the UI Designer, generating the interface components

- Antigravity was the Full-Stack Developer, writing the simulation logic

This only worked because the raw data was already structured from years of DFPT habits. The research notes were documented. The experimental results were in databases. The physics parameters were catalogued in a Data Dictionary.

Without that foundation, the AI team would have produced a generic, inaccurate simulation. With it, they produced something that domain experts validated as technically accurate.

AI teams are only as good as the data context they inherit. DFPT isn't just about the current project — the structured data you build now feeds every future project that touches it.

How to Start Thinking Data-First

If you want to try this, here's where to actually begin:

0. Start With Pen and Paper

We know we just spent 2,000 words talking about data structures and AI context. But seriously: don't open a single app yet.

Get a notebook. Any notebook. For one week, write down your daily journal and your to-do list by hand. What you worked on. What decisions you made. What's blocking you. That's it.

If pen and paper feels too analogue, use the Notes app on your phone. It doesn't matter. What matters is that you do it every day without exception.

Keep going past that first week, and after a month you'll notice a change. Not because of the tool, but because the habit of writing things down forces you to separate what actually happened from what you think happened. That gap is where DFPT lives.

The Data Dictionaries and centralised documentation come later. The habit comes first. You can't structure data you never bothered to capture.

1. Then Start a Data Dictionary

Pick one project, one system, one workflow. Document every data field: what it's called, where it comes from, how often it updates, who owns it. This single document will transform how you — and any AI tool — interact with that system.

2. Track Everything in Writing

Every SOP, every new feature decision, every meeting action item — write it down in a shared, searchable place. Not in chat messages. Not in your head. In documents.

This isn't bureaucracy. This is building the context layer that makes AI actually useful. Next time you need an AI tool to help with a decision, you'll have six months of documented context to feed it instead of a vague prompt from memory.

3. Define Hypotheses Before Opening AI Tools

Before you type a prompt, write down what you're trying to learn. What specific question are you answering? What data would prove or disprove your hypothesis? What format do you want the answer in?

This three-question exercise, done before you interact with any AI, will improve your output quality more than any model upgrade or subscription tier.
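One way to make the exercise a habit is to refuse to build a prompt until all three answers exist. The `HypothesisBrief` class below is a hypothetical helper, not part of any AI tool's API. It holds the three answers and only then combines them with your structured context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HypothesisBrief:
    """The three things to write down before opening an AI tool."""
    question: str        # what specific question are you answering?
    evidence: str        # what data would prove or disprove the hypothesis?
    answer_format: str   # what format do you want the answer in?

    def to_prompt(self, context: str) -> str:
        """Combine the brief with structured context into one prompt string."""
        for label, value in (("question", self.question),
                             ("evidence", self.evidence),
                             ("answer_format", self.answer_format)):
            if not value.strip():
                raise ValueError(f"{label} must be written down first")
        return "\n\n".join([
            f"Question: {self.question}",
            f"Evidence that would confirm or refute it: {self.evidence}",
            f"Answer format: {self.answer_format}",
            f"Context:\n{context}",
        ])
```

The context argument is where the Data Dictionary and the relevant data extracts go. The brief itself stays three short lines.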

4. Audit Your Data Gaps

The most useful thing you can discover is what data you're not collecting. Run a gap analysis: for every decision you make regularly, ask "What data am I using to make this decision? Is it complete? Is it current? Is it accessible?"

The gaps you find will tell you exactly where to invest next.
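The gap analysis above can be run as a small script. This is a sketch under assumptions: decisions and data sources are listed by hand, "current" is taken to mean updated within `max_age_days` (30 by default, an arbitrary threshold), and the names used are illustrative.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DataSource:
    name: str
    last_updated: date
    complete: bool      # does it cover everything the decision needs?
    accessible: bool    # is it in a shared location, not one person's laptop?


def audit(decisions, sources, today, max_age_days=30):
    """Map each decision to the problems found in the data it relies on.

    decisions: {decision name: [source names it depends on]}
    sources:   {source name: DataSource}
    """
    gaps = {}
    for decision, needed in decisions.items():
        problems = []
        for name in needed:
            source = sources.get(name)
            if source is None:
                problems.append(f"{name}: not collected")
                continue
            if not source.complete:
                problems.append(f"{name}: incomplete")
            if (today - source.last_updated).days > max_age_days:
                problems.append(f"{name}: stale")
            if not source.accessible:
                problems.append(f"{name}: not accessible")
        if problems:
            gaps[decision] = problems
    return gaps
```

Decisions with no problems are left out of the result, so the output reads directly as a list of where to invest next.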

Context Engineering > Prompt Engineering

The industry is obsessed with prompt engineering — crafting the perfect instruction to squeeze better output from a model. Better prompts do help.

But prompt engineering has a ceiling. Context engineering keeps paying off the more you invest in it.

A well-crafted prompt with no data context still produces a generic answer. A rough prompt with rich, structured data context produces a specific, useful answer. We've seen this play out hundreds of times across projects.

This is why we spend more time organising our data, documenting our decisions, and building Data Dictionaries than we spend tweaking prompts. The context does the heavy lifting. The prompt just points the AI in the right direction.

The Deeper Truth

Data-First Principle Thinking isn't really about data. It's about honesty.

It's saying "I don't know yet — let me look at the data" instead of "I think I know — let me find data that confirms it." It's writing things down even when no one is checking. It's letting the numbers contradict your assumptions when they do.

We built Alpha Bits on this. It led us to inventions we had no business making, insights we didn't have the expertise to predict, and products that work because they're grounded in what the data actually says.

The tools will keep changing. The models will keep improving. But the habit of collecting, structuring, and reasoning from data — that doesn't go out of date.

Start there.

Data-First Principle Thinking is the operating philosophy behind everything at Alpha Bits — from thermal energy research to AI-powered development. We write about it because we think the framework is more useful when it's shared.